YouTube Is Giving Pedophiles The Best Way To Find The Videos They Want

Aadhya Khatri - Feb 20, 2019

YouTube is to blame for providing a platform where pedophiles can connect and channel owners can make money from child porn.


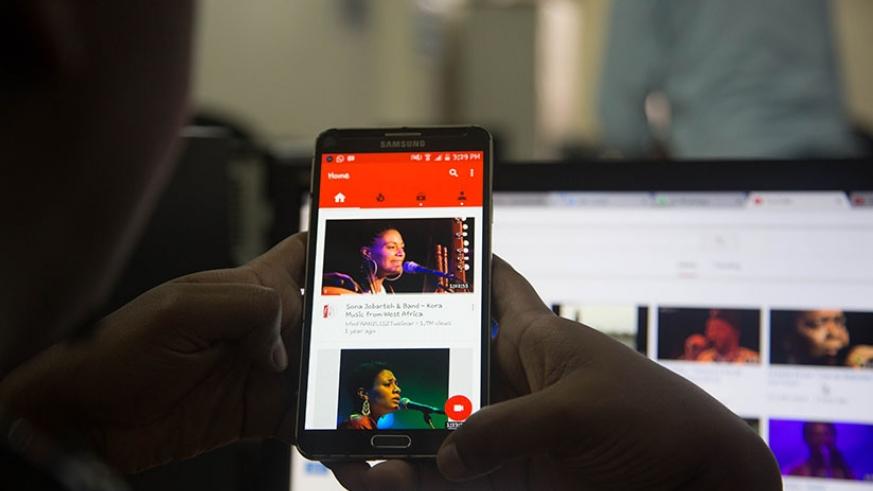

YouTube is a massive video-sharing platform, so it comes as no surprise that users can find almost anything there, including material that is against the law. The platform now appears to be making such toxic material easier to find.

YouTube uses an algorithm to suggest videos related to what users are watching. The downsides of this approach include promoting conspiracy theories and radicalization. Recently, the platform faced another accusation: that it is helping child porn gain popularity.

YouTube is fueling child porn with its algorithm

This matter was brought forward by Matt Watson. He discussed it in one of his videos, which had as many as one million views when this article was written. He also pointed to several videos on YouTube that show inappropriately dressed young girls. Most disturbing of all, these videos usually have more than 1 million views.

Many viewers include timestamps in their comments to mark the moments when the girls are in compromising positions, along with shocking statements about them. The channels hosting the videos even encourage these disgusting comments and, in the meantime, make money.

According to Watson, YouTube's algorithm inadvertently helps pedophiles connect and share information. Even a new account can find these videos after about 10 minutes of searching, or in fewer than 5 clicks.

YouTube is not doing enough to address this matter

YouTube currently uses another algorithm to detect and remove suspicious videos based on their tags or titles, which is far from effective. This is why the wrong accounts sometimes get banned, and their owners must contact the platform's human team to get them back.

This system also lets countless channels showing child porn slip through. On the rare occasions it catches the right people, it is because a human team is working on the matter, since users who are motivated to report the wrongdoing are unlikely to search for such videos in the first place.

For now, YouTube's weak effort has led nowhere. The channel owners go unpunished, and pedophiles are conveniently handed a list of exactly what they are looking for.
