Google AI Chips Gang Up To Speed Up Training Process

Dhir Acharya - May 09, 2019

Google announced at its I/O conference that customers can now combine multiple TPU chips to boost performance.


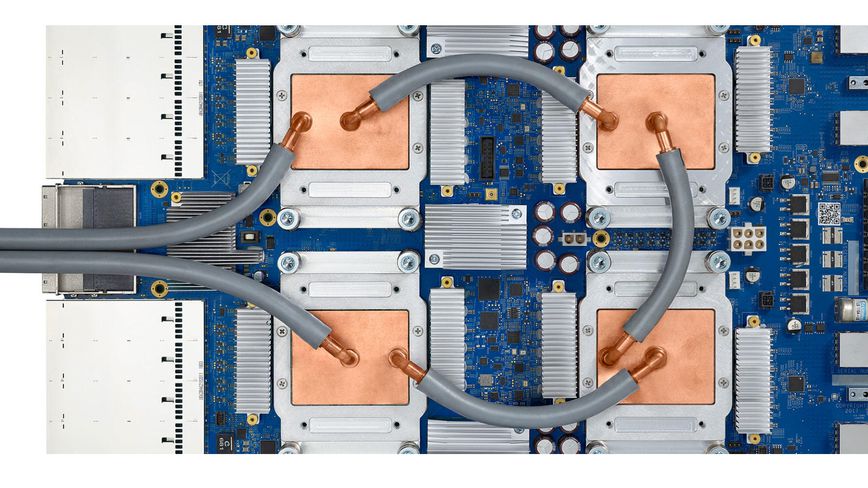

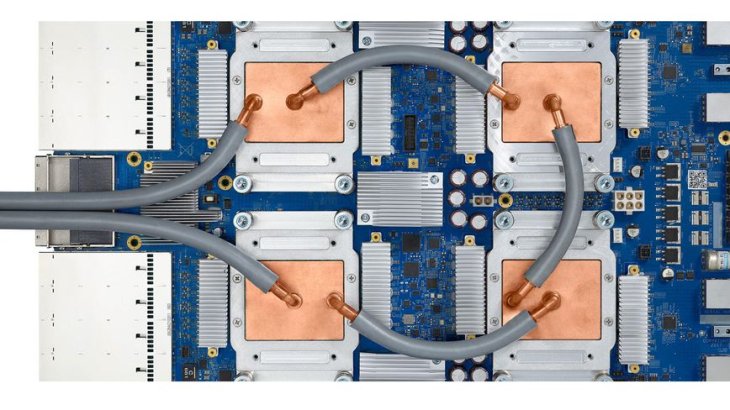

If you feel that Google's data centers are limiting your AI work, the tech giant, whose parent company is Alphabet Inc., will now let you combine multiple TPU (tensor processing unit) chips to boost performance.

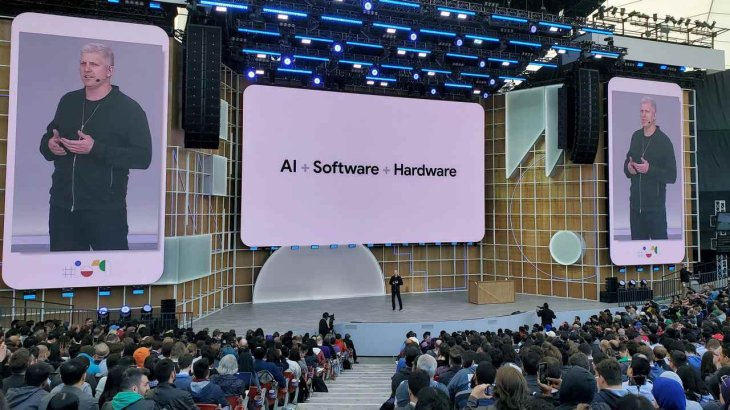

According to the company’s announcement at its I/O conference on Tuesday, the Google Cloud service now offers users TPUs that are linked into “pods.” This speeds up AI training, the phase in which AI systems learn to detect patterns in real-world data.
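The announcement does not say how a pod divides the work among its chips. One common way to spread training across many accelerators is synchronous data parallelism: each chip computes gradients on its own slice of a batch, and the chips then average those gradients before every update. The sketch below is a hypothetical, pure-Python simulation of that idea under stated assumptions (a toy one-weight linear model, equal-sized shards, a simple averaging step standing in for the chip interconnect); the function names are illustrative and are not part of Google Cloud or any TPU API.

```python
# Hypothetical sketch of synchronous data-parallel training across simulated "chips".
# Not Google's TPU pod software; the model, names, and numbers are illustrative only.
from typing import List, Tuple

Example = Tuple[float, float]  # (x, y) pair for a toy 1-D linear model y ≈ w * x


def shard(batch: List[Example], num_chips: int) -> List[List[Example]]:
    """Split a global batch into equal-sized shards, one per simulated chip."""
    assert len(batch) % num_chips == 0, "batch must divide evenly across chips"
    size = len(batch) // num_chips
    return [batch[i * size:(i + 1) * size] for i in range(num_chips)]


def local_gradient(w: float, examples: List[Example]) -> float:
    """Gradient of the mean of 0.5 * (w*x - y)^2 over one shard."""
    return sum((w * x - y) * x for x, y in examples) / len(examples)


def all_reduce_mean(values: List[float]) -> float:
    """Assumed stand-in for the interconnect: average the per-chip gradients."""
    return sum(values) / len(values)


def train_step(w: float, batch: List[Example], num_chips: int, lr: float) -> float:
    """One synchronous update: shard, compute local gradients, average, apply."""
    shards = shard(batch, num_chips)
    # On real hardware each chip would compute its gradient at the same time;
    # here the loop runs them one after another.
    grads = [local_gradient(w, s) for s in shards]
    return w - lr * all_reduce_mean(grads)


if __name__ == "__main__":
    data = [(float(x), 3.0 * x) for x in range(1, 9)]  # true weight is 3.0
    w = 0.0
    for _ in range(50):
        w = train_step(w, data, num_chips=4, lr=0.02)
    print(round(w, 4))
```

Because the shards are equal in size, averaging the per-chip gradients gives the same update as computing the gradient on the whole batch at once; the speedup on real hardware comes from each chip handling only its share of the examples.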

With these larger systems speeding up AI training, customers can not only build more complex models but also update them more frequently with new data.

As processor gains driven by Moore’s Law have slowed, many companies are shifting to making AI chips or integrating AI capabilities into their existing processors. These include giants Apple and Google; chipmakers Qualcomm, Intel, and Nvidia; startups such as Flex Logix and Wave Computing; and other players such as Tesla, the carmaker that has built its own AI chip to enable self-driving mode in the Model S, Model X, and Model 3.

At this year’s I/O conference, Google made extensive use of AI and showed off many new applications for the technology. The company is also following Facebook and Apple in pushing AI processing off servers and onto devices such as home hubs and phones, which both relieves the load on Google’s servers and helps protect privacy.

Small devices can handle AI tasks such as face and speech recognition. Training AI, however, requires massive computing systems, the kind found at cloud giants such as Google, Microsoft, and Amazon.
