This AI Can Tell People's Feelings By Analyzing The Way They Walk

Ravi Singh - Aug 06, 2019

Researchers have built an AI that sorts people's emotions into four main types based on the way they walk.

Our emotions affect more than our appetite or how often we laugh and smile. They can also influence the way we walk. Researchers in the United States are now developing an artificial intelligence (AI) that can infer how people feel from the way they walk.

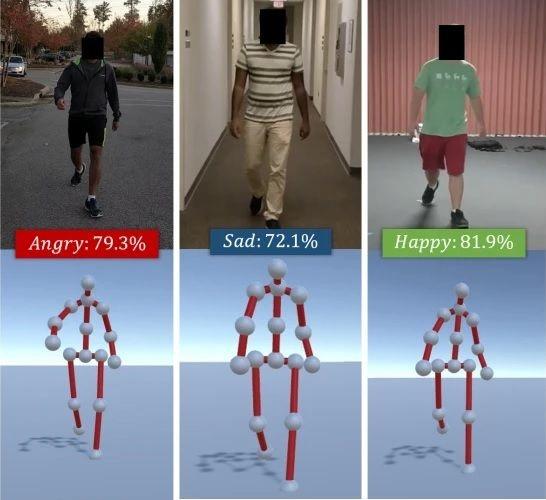

Scientists from the University of Maryland, College Park (UMD) and the University of North Carolina, Chapel Hill (UNC) have developed deep learning algorithms for this purpose. The system analyzes videos of people walking, transforms them into 3D models, and extracts their gait. A neural network then identifies the main type of movement involved and how it links to a specific emotion.

The model appears able to distinguish four emotions (angry, sad, neutral, and happy) with 80% accuracy.
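To make the pipeline concrete, here is a toy sketch of the last two stages: reducing a sequence of 3D poses to gait features, then mapping those features to one of the four emotions. It is not the UMD/UNC model, which relies on deep learning. Instead it assumes two hand-picked features (walking speed and head height above the pelvis) and swaps in a simple nearest-centroid classifier. The joint names, function names, and every number in `TOY_WALKS` are hypothetical and chosen only for illustration.

```python
import math

EMOTIONS = ("angry", "sad", "neutral", "happy")

# Hypothetical (speed in m/s, head height above pelvis in m) per emotion.
# These values are invented for the demo, not measured data.
TOY_WALKS = {
    "angry": (1.8, 0.62),
    "sad": (0.8, 0.50),
    "neutral": (1.2, 0.60),
    "happy": (1.5, 0.66),
}


def make_walk(speed, head_height, n_frames=31, fps=30):
    """Build a synthetic straight-line walk as a list of 3D poses."""
    frames = []
    for i in range(n_frames):
        x = speed * i / fps
        frames.append({
            "pelvis": (x, 1.0, 0.0),
            "head": (x, 1.0 + head_height, 0.0),
        })
    return frames


def gait_features(frames, fps):
    """Reduce a pose sequence to [speed, mean head height above pelvis]."""
    if len(frames) < 2:
        raise ValueError("need at least two frames to measure speed")
    duration = (len(frames) - 1) / fps
    x0, _, z0 = frames[0]["pelvis"]
    x1, _, z1 = frames[-1]["pelvis"]
    # Ground-plane distance covered by the pelvis, divided by elapsed time.
    speed = math.hypot(x1 - x0, z1 - z0) / duration
    head_height = sum(f["head"][1] - f["pelvis"][1] for f in frames) / len(frames)
    return [speed, head_height]


def fit_centroids(samples):
    """Average the feature vectors of each label: samples is [(vector, label)]."""
    sums, counts = {}, {}
    for vec, label in samples:
        acc = sums.setdefault(label, [0.0] * len(vec))
        for i, value in enumerate(vec):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {label: [s / counts[label] for s in acc] for label, acc in sums.items()}


def predict(centroids, vec):
    """Return the label whose centroid is closest to the feature vector."""
    return min(centroids, key=lambda label: math.dist(vec, centroids[label]))


if __name__ == "__main__":
    training = [
        (gait_features(make_walk(s, h), 30), label)
        for label, (s, h) in TOY_WALKS.items()
    ]
    centroids = fit_centroids(training)
    slow_slumped = gait_features(make_walk(0.85, 0.52), 30)
    print(predict(centroids, slow_slumped))
```

A real system would learn its features from many labeled walking videos rather than rely on two hand-chosen measurements, but the shape of the computation is the same: poses in, features out, one label per walk.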

Previously, AI systems have been trained to infer how people feel from their facial expressions and voices. However, this is the first time an algorithm has been developed to guess people's emotions just by analyzing the way they walk.

According to Aniket Bera, a research assistant professor at the University of North Carolina at Chapel Hill, the AI does not necessarily detect a person's real emotion. Instead, it predicts feelings from physical cues, much as people do when they try to read one another's emotions in daily life.

Bera also said the research could be used to teach robots to predict the emotions of the people around them and adjust their behavior accordingly. It could also help engineers design more human-like robots that use their movements to express emotion.

As a next step, the team plans to analyze everything from running to gestures, which should help them better understand how people express emotions.
